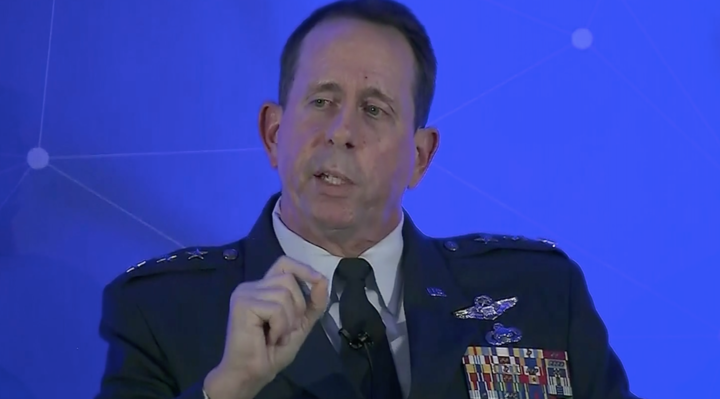

‘Deep fakes’ pose a major threat to elections and even world peace, Air Force Lt. Gen. John Shanahan, Director of the U.S. Department of Defense’s Joint Artificial Intelligence Center, has warned.

“What if a senior leader was to come on and announce that the nation was at war, but it was a deep fake?” he asked.

“It’s something I think a lot about because the level of realism and fidelity has vastly increased from just a year ago,” the Director of the US Joint Artificial Intelligence Center said.

“People have such a growing cynicism and scepticism about what they’re reading, seeing and hearing, that this could become such a corrosive effect over time that nobody knows what is reality anymore.

“Those are areas that are of increasing concern across the whole of society, not just the US military.”

“Within probably 30 minutes you can get online and start developing fairly high fidelity deep fakes,” he said.

The General also sought to provide reassurance that as AI capability developed, the US military would not seek to develop autonomous “killer robots”.

“There are aspects of AI that feel different – the black box aspect of machine learning – but overall we have the process and policies in place to ensure that we [stick to] the laws of war, rules of engagement and proportionality.

“We will not violate those core principles.

“Humans will be held accountable. It will not be something that we say ‘the black box did it, no-one will be held accountable’. Just like in every mistake that has happened on a battlefield in our history, there will be accountability.

“We are not looking to go to this future of ... killer robots: unsupervised, independent, self-targeting systems.

“Lethal, autonomous weapon systems, right now for the Department of Defence, is not something we are working actively towards.”

Below is a full rush transcript of the press conference by Air Force Lt. Gen. John Shanahan, Director of the Joint Artificial Intelligence Center, U.S. Department of Defense.

Lt. Gen. Shanahan: I am Lt. Gen. Jack Shanahan, the Director of the U.S. Department of Defense Joint Artificial Intelligence Center, also known as the JAIC, located in Washington, D.C. I’m joined on the call by our Chief Technology Officer, Mr. Nand Mulchandani. Nand joined our team last year after an impressive 25-plus-year career in Silicon Valley, where he founded, sold, and bought several tech startups and guided them to success. He has exactly the kind of experience and technical expertise that is so important for a new government organization like the JAIC that is trying to adopt emerging technologies such as artificial intelligence.

Nand’s invaluable insights and contributions to the JAIC and his presence with me on this trip underscore the importance of cooperation between the commercial technology sector and the military. These partnerships are vital to the future of our shared values in this era of emerging and disruptive technologies. In fact, more than ever before, our militaries are looking to the commercial sector to help us integrate AI-enabled capabilities that are safe, ethical, reliable, and aligned to our respect – with our respect for international law and human rights.

This week we are meeting with NATO and European Union leaders to discuss how AI will transform our respective militaries in the coming years. From the U.S. viewpoint, we see AI as a transformational technology, one that will help preserve the strategic military advantage that the United States and our allies and partners in NATO have enjoyed for more than 70 years. In that respect, we recognize the value of AI for a wide range of capabilities across the full spectrum of the defense enterprise in a manner that is joint and interoperable with our allies and partners.

Europe, like the United States, thrives on a vast marketplace of ideas and freedom of expression. We recognize that there are skeptics on both sides of the Atlantic concerning the military applications of AI. As you might imagine, I have a pragmatic worldview on AI. I compare AI to electricity or to computers. Like electricity, AI is a transformative, general purpose technology. AI is capable of being used for good or for bad, but is not a thing unto itself. In essence, AI equates to machines that perform as well or better than humans in a variety of functions. For the U.S. and our allies, our most valuable contributions will come from how we use AI to make better and faster decisions and optimize human-machine teaming.

The U.S. envisions that as AI in its different fields such as machine learning and natural language processing mature, it will help commanders in the field make safer and more precise decisions during high consequence or mission critical operations. We also believe that AI will help create a more streamlined organization for so-called back-office functions by reducing inefficiencies from manual, laborious operations, with the objective of simplifying workflows and improving the speed and accuracy of repetitive tasks.

We have a lot of work to do in the U.S. military in this regard. Success with AI adoption requires a multi-generational commitment with the right combination of tactical urgency and strategic patience. The U.S., along with our NATO allies, will face difficult decisions regarding the future of legacy systems and platforms in an era where technological innovations are transforming every aspect of the human experience. We are entering a new era of global technological disruption, one that is fueled by data, software, AI, cyber, and cloud, with 5G soon to explode globally. The pace of change is breathtaking. With no end in sight to the speed or scope of change, the United States understands that we must embrace this technological transformation to meet future global security challenges.

This is why the United States military is prioritizing the acceleration of AI adoption. In fact, Secretary of Defense Mark Esper started – stated publicly that AI is his number one technology modernization priority. Our response is spelled out in the U.S. administration’s American AI Initiative and the Department of Defense AI Strategy, the unclassified summary of which was released last February and is available online. These are the documents that explicitly called for the creation of our Joint AI Center, which serves as the focal point for execution of our AI strategy.

Broadly, the JAIC has three major roles. First, we are developing and delivering AI capabilities that make use of existing AI-enabled technologies from commercial industry and academia. We have six AI projects underway, each of which represent areas where we know there is off-the-shelf AI technology that can be modified for Department of Defense missions. These missions range from humanitarian assistance and disaster relief to aircraft predictive maintenance, cyber defense, warfighter health, intelligent business automation, and our priority project for the next year, joint warfighting. With our mission initiatives, we’re looking for projects that can demonstrate initial return on investment in one to four years.

Our second major role is working on the long-lead items or foundational building blocks that are preventing wider adoption of AI technology throughout the U.S. military – critical first steps, including building an enterprise cloud-enabled platform, human capital management, acquisition reform, and data management. With its formal launch in 2020, the JAIC’s AI platform as a service, which we call the Joint Common Foundation, will lower the barriers to entry for AI developers and users throughout the Department of Defense.

Presently, much of the best commercial AI technology is being developed through open-source tools that demonstrate high performance but also contain significant cyber vulnerabilities. We want AI developers to have easy access to these open-source tools, but given today’s ubiquitous cyber threat, we are taking steps to ensure cyber security is considered at every stage of the AI delivery lifecycle.

Our third role is to serve as the DOD’s AI center of excellence, managing the DOD-wide governance process for AI to address areas such as acquisition reform as well as key policy questions, to include how we use AI-enabled capabilities safely, lawfully, and ethically. Through the JAIC’s role in AI governance, we are diligently reviewing recommendations from organizations such as the United States Defense Innovation Board, an independent forum of subject matter experts from private industry and academia, along with the national – U.S. National Security Commission on AI, to adopt ethical standards and principles for AI adoption that are aligned with our nation’s values but still enable the United States and our allies to maintain a strategic military advantage through the use of AI-enabled technology.

We are keenly aware that our strategic competitors are embracing this technological revolution and are moving very deliberately towards a future of artificial intelligence. Many of the AI applications of both Russia and China run in stark contrast to the values of Europe and the United States, and raise serious questions regarding international norms, human rights, and preserving a free and open international order. At the same time, we are concerned that some countries in Europe are at risk of becoming immobilized by debates about regulation and the ethics of the military use of AI. We recognize that there are legitimate ethical concerns with any military technology, but we have crafted an approach that allows us to move forward in adopting the technology in parallel with addressing these ethics concerns.

Given the importance of the NATO alliance, we desire a future that enables digital-age cooperation and interoperability between the U.S. and NATO while respecting and honoring the strong commitment to safe, responsible, and ethical uses of technology.

From the U.S. viewpoint, the best way to preserve responsible and ethical values in AI military technology is to work alongside our allies and partners to provide global leadership in this consequential field. And the stakes could not be higher for both the United States and NATO. We are encouraging our allies to work with us to develop and implement strong AI principles for defense.

Russia and China are cooperating on AI in ways that threaten our shared values and risk accelerating digital authoritarianism. For example, China is utilizing AI technology to strengthen censorship over its people and stifle freedom of expression and human rights. China is also facilitating the sale of AI-enabled autonomous weapons in the global arms market, lowering the barrier of entry of potential adversaries and potentially placing this technology in the hands of non-state actors. Perhaps most concerning, Chinese technology companies, including Huawei, are compelled to cooperate with its Communist Party’s intelligence and security services no matter where the company operates.

Russia’s use of AI for national security has been characterized not so much by superior technology but by a greater willingness to disregard international ethical norms and to develop systems that pose destabilizing risks to international security. Russia is also pursuing greater use of machine learning and automation for its global disinformation campaigns as well as lethal autonomous weapons systems.

These security challenges and the technological innovations that are changing our world should compel likeminded nations to shape the future of the international order in the digital age, and vigorously promote AI for our shared values. AI, like the major technology innovations of the past, has enormous potential to strengthen the NATO alliance. The deliberate actions we take in the coming years with responsible AI adoption will ensure our militaries keep pace with digital modernization and remain interoperable in the most complex and consequential missions, so that we can continue to rely on the collective security architecture that has preserved peace, prosperity, and stability in Europe and beyond for decades.

Over the long term, as electricity and computers did for us in the past, I believe that AI technologies will set the stage for transforming the NATO alliance. The future of our security and freedoms depends on it.

Question: What are the kind of safeguards that you think should be put in place to make sure that the technology that you are developing or commercial players are developing won’t end up in the hands of malicious actors or in the hands of countries, organizations where it’s not supposed to be?

Lt. Gen. Shanahan: Well, I think Nand will want to talk on this as well. Because this technology is coming largely from commercial industry in terms of how rapidly it’s being developed and fielded, we have serious concerns about non-state actors and their ability to grab these capabilities from the open-source market. That is an area which we are beginning to place a lot more attention on to protect the technology not getting out into other non-state-actor hands for ill purposes.

That’s not easy to do because it is so widely available. And I can’t get into details in this forum, but we are taking steps to look very closely at how that technology could be exported to people that choose to use it for bad purposes. But it is also difficult to do that given how easy it is to develop these technologies today.

I think that’s what makes this different from a lot of technology in the past that was developed by the military and U.S. military allies and partners, and then you had commercial spinoffs. In this case it’s almost the opposite, where it’s the commercial technologies have been developed first and then we’re repurposing those for military purposes. But when you do that, those technologies will be available to almost anyone with not a lot of effort to go get them. That risks a future which destabilizes the international order in the digital age, so I will tell you that we’re looking carefully about how we would prevent proliferation of those capabilities. But I will not pretend that we can do that easily or immediately.

Mr. Mulchandani: Just to add to what the General pointed out and get a little more – deeper into the tech, one thing that makes artificial intelligence algorithms and systems different is the need and presence for data for training. And the algorithms and the toolsets are becoming widely available in an open-source model, which in today’s day and age, the democratization of and availability of these libraries and toolkits – we’re seeing that in crypto, we’re now seeing this in AI. It’s very hard controlling that because, I mean, the world is flat when it comes to software and the proliferation of this.

However, the data – which is a key ingredient in building effective AI models to go do this – is something that every organization owns for themselves, and keeping the data, making sure that the data is curated properly, is stored properly, and controlling access to that is an incredibly important part of this data protection and AI protection systems that we’re putting in place. But that’s actually a key piece that we need to make sure doesn’t get out there.

Question: Will artificial intelligence also be practiced in targeted killings, as we experienced about a week ago? And if it’s so, maybe you could define for me at least how could that be defensive and so, keeping peace?

Lt. Gen. Shanahan: Yeah, so this is an important question. Let me – let me state up front that that is not anything that we’re working on in the U.S. military right now. Accountability is a principle that is first and foremost in any of our weapons technology throughout the history of the Department of Defense. We go through a rigorous and disciplined process through test and evaluation, validation and verification, before we would ever field this. That has been no different than any technology the department has developed in its history. And there are aspects of artificial intelligence that feel different – the black box aspect, say, of machine learning.

But overall, we have a process and policies in place to ensure that we have the laws of war, international humanitarian law, rules of engagement, the principles of proportionality and discrimination and so on, that we will not violate those core principles. AI does not – does not change that. Humans will be held accountable. It will not be something that we say, “The black box did it – no one will be held accountable.” Just like in every mishap or mistake that’s happened in a battlefield in our history, there will be accountability, and that is a core principle of how we’re developing these capabilities. We are not looking to go to this future of what some would say is the worst-case assumption for the “Department of Killer Robots,” of, say, unsupervised, independent, self-targeting systems. None of us are looking for that future. We have a very disciplined approach to fielding those, beginning with lower consequence mission sets, taking the lessons and principles we learned from those lower consequence, less critical missions and beginning to apply them to warfighting operations.

So we – I don’t see the scenario as you described it being something that we’re working for. We have safeguards in place to ensure that that outcome actually doesn’t happen the way that you talk about it.

Mr. Mulchandani: I mean, the laws – the laws on our books are not changed by technology that easily, and AI is another one of these things where it needs to be absorbed into the frameworks and ethical rules and laws in society and what we have as a government. So there’s nothing here we’re planning on that, and just to echo this point: AI is a technology in its infancy, and part of it is every company and organization adopting it is adopting it in a sort of decision-support model for personalization. I mean, think about where AI, this current sort of phase of AI has come from, is ad tech, right, which sort of pushed these sort of boundaries and has created the sort of movement here. All of it is around personalization and support, which is really the projects and things that the General outlined in our opening statements. Those are the projects that we’re working on to learn and absorb this into the mode of operation, which then leads us to more sophisticated use cases, but over time, in the careful way.

Question: You mentioned the challenge posed by China and Huawei, at the same time saying you wanted to cooperate with NATO allies. If a NATO ally like the UK went ahead with awarding a contract to Huawei for 5G, would that undermine that cooperation? Would that – would that be a problem in sharing information, knowhow, skills with an ally?

Lt. Gen. Shanahan: I’ll be careful not to go outside of my qualified – well, my qualifications to answer that question, which is largely a policy question. But let me just get to the broader point. 5G and AI will have a future that will be inextricably linked. Whatever AI looks like today will advance even much faster as 5G becomes promulgated throughout the world. What our concerns are is access to data – as Nand said earlier, if you have access to data, you basically have access to algorithms and can defeat the models – and then how is the data being shared, who is it being shared with.

So I guess my starting point to answer that question would be if that were to happen in a theoretical case, we would have these discussions at a policy level as well as at a technical level to under – to really appreciate the full ramifications of having Huawei in a network anyplace in the world that was touching other allies and partners’ systems. There are a lot of unknowns about this right now. So what safeguards could be put in place if that were to happen? And if there weren’t sufficient safeguards, what could we do to ensure that technology wasn’t stolen and given away to an adversary without even us understanding how it took place?

So I’ll answer that largely as a theoretical as opposed to this is about to happen, but these will be questions we will have to address at a policy level as well as the technical level to understand what might be the case if we’re developing artificial intelligence with a 5G backbone, and then what happens – what are the ramifications of that for all of the countries involved that may be touching that network.

Question: You were talking earlier about the – your concern about the proliferation of some of these AI tools, and I wondered since AI is so broad and there are so many different types of applications, what do you see as having the biggest risk in the short term? Is it, for instance, some of these tools that do predictive modeling, or surveillance tools, things like facial recognition, or some of the tools that can, for instance, mimic human voices or deep fakes, things like that?

Lt. Gen. Shanahan: I know Nand will want to also weigh in. My answer is a couple of those – I think we’re all concerned, deeply concerned, right now about deep fakes. It’s not an area a year ago I was thinking that much about as we stood up the Joint Artificial Intelligence Center. It’s something I think a lot about now because the level of realism and fidelity is vastly increased over just a year ago, using capabilities such as generative adversarial networks to build these capabilities. Now what do we – what do we develop a similar technology to defeat or at least to recognize when a deep fake is happening? We’ve seen the corrosive influence of some of these disinformation campaigns against political election cycles. What if a senior leader were to come on and announce that the nation is at war but it was a deep fake? Those are areas that are of increasing concern across all of society, not just the United States military but they could be used anywhere across the world. They’re not hard to get access to either. I think within probably 30 minutes you can get online and start developing fairly high-fidelity – not perfect but high-fidelity deep fakes. So that’s an area of concern.

Ubiquitous social surveillance, facial recognition is an area that we have a concern about, used by authoritarian regimes. Again, it’s not the technology itself I’m worried about; it’s how the technology is being used. So this aspect of what’s available and how quickly it can be used, and go back to what Mr. Mulchandani had said earlier: It’s the data behind it that really matters more than just the algorithm itself. The algorithm is becoming a commodity. It’s how you train it against the data to develop a model, then the model is fielded, and then how is that model continuously updated based on new information. That’s what matters the most.

But in the near term, I do worry about the deep fake piece of this, and people have such a growing cynicism and skepticism of what they’re reading, seeing, and hearing that this could become such a corrosive effect over time that nobody knows what reality is anymore. So we have to – we have to help develop tools, and there are a lot of big commercial industries as well as startups and DARPA in the United States that are working on how to detect deep fakes and counter them. But those are the areas I’d say in the immediate term I’m worried about.

Mr. Mulchandani: Yeah, and I would add just broader information operations, so deep fakes as a component of a concerted sort of effort. But really going back to sort of our original point, the tools themselves are going to be open-source. We – now, that’s actually somewhat asymmetrical in the sense that we do know that China, all the work that they do in terms of extending or moving those algorithms along, et cetera, we are not going to benefit from those because those don’t get published. Our – in our society and academic and other institutions, most of the toolkits and work that’s been going on in AI gets published immediately and then gets democratized very, very quickly.

So controlling that or trying to put a sort of framework around that, et cetera, is just not going to work. I mean, that tech is out there. So again, data protection, ensuring the accuracy and security of our data – there’s a lot, a lot of work that we’re doing around tests and eval, making sure that the models are performing correctly, but then using that stuff – if adversaries are using that for information operations, offensive operations on the cyber side is another big area of concern. So that’s another set of things that we’ll be working on in terms of defensive technologies or being able to unravel or understand how these – countering many of these things is actually going to be a very active area of research but also development.

Lt. Gen. Shanahan: And before we go to the next question I just want to make another point on this idea of how this technology can be used. The spotlight is on artificial intelligence right now for all the reasons that we live every day. But I think more about how would I combine artificial intelligence and genetic engineering or bioengineering. That actually worries me a lot more in terms of the combination of those together and the proliferation of capabilities, genetically modified or genetically engineered capabilities that promulgate much faster than anybody intended. So it’s not one or the other. How I use those together makes us think about a little bit of a dystopian future that we want to prevent to the max extent that we can.

Mr. Mulchandani: Yeah, which is why ethics and policies and frameworks – we’re engaging in this discussion early on to make sure that we at least have the basis for policy and structure to guide these discussions as these new use cases pop up. We really need to have inclusive frameworks to make sure all this can be handled.

Question: I wanted to ask you regarding still some autonomous weapons systems. As you know, for instance, the U.S. Navy is already operating autonomous working – what they call sub hunters, submarine hunting vessels, who can operate for several months autonomously on the sea. How do you combine that kind of developments with the human responsibility all the time? Because these developments are there; DARPA is very much invested in this. Could you explain a little bit more on that issue?

Lt. Gen. Shanahan: I – first, most important starting point is to make the distinction between autonomous systems and AI-enabled autonomy. They are two different things that tend to be conflated. The Department of Defense has semiautonomous and, to some extent, autonomous systems, and some of these have been used for 30 years, and some element of autonomous systems – say, the guns on a ship, a Navy ship, that protect from close-in attack that can be operated in a semiautonomous – in some cases missile systems even autonomously for defensive purposes. But now we start adding AI-enabled autonomy. That is where the process by which we will go through test and evaluation, validation and verification, and applying our policy principles that the department has in place already. Well before AI became – became sort of the hottest commodity on the market, we have a policy document on autonomy in weapons systems that governs this, and we will abide by that now as we apply the AI-enabled piece of this.

There is a tendency sometimes, and I say it’s an unfortunate tendency, for people who want to jump straight to this – and I know this is becoming a cliché, but “killer robots,” and I say these lethal autonomous weapons systems, which is right now for the Department of Defense not something that we’re working actively toward. We are working towards autonomous systems and AI-enabled autonomy, but the idea of these sort of unsupervised, independent, self-targeting systems is a future which is not something that any commander I’ve ever worked with, worked for or been part of a command and organization myself is interested in, in sort of “killer robots” with self-agency roaming indiscriminately. That’s not something we’re interested in. We have humans that will be at some point in the loop, or on the loop, or outside the loop.

These are the things that we’re working through right now, and the questions of the appropriate levels of human judgment are one phrase that we use in this policy world, but the other is meaningful human control. Those are areas we’ll work through on some of these lower consequence mission use cases. We’ll also do tabletop exercises and experiments in gaming to understand what are those principles and policies that we have to work our way through before we get to the point that you’re talking about, is fully autonomous systems that also have AI-enabled capabilities that might be weapons capabilities. We are a long way from that. We know that future could be there, so we’re working our way through all these other questions before we ever get to that point.

Mr. Mulchandani: The Defense Innovation Board is a board of independent experts in the area that the Defense Department, 15 months ago they started on a project to come up with a set of broad principles that the DOD should abide by, and they’ve just released a report – again, available online, great read. But it outlines five core principles that the Defense Department should abide by and consider as it’s building out its systems, and literally the first one is governance. It’s about human oversight, human in the loop, and that is a principle that goes directly into all of the systems. And the distinction that the General brought up between right now, one of the other points that the DIB report makes which very clearly says AI and autonomy are getting very, very confused right now. A vast majority of the AI that’s being applied today is in machine learning, clustering algorithms, image recognition, decision support to make us better, faster or more accurate, but in no cases is it taking over the responsibility for any of the work that anyone does. Even at commercial industry we wouldn’t be doing that. That’s absolutely still the case here at the DOD.

Question: Will we witness any kind of cooperation between Russia and China in this field?

Lt. Gen. Shanahan: We are seeing very clear evidence of Russia and China cooperation on artificial intelligence. Like any two countries, they don’t necessarily have all the same interests so there will be differences in how they approach it. There’ll be concerns by one country and the other. But I do see, and we do see, just very clear evidence that they are working together in joint ventures, partnerships, artificial intelligence development of capabilities.

So yes, we see that. It’s concerning to us. But we – the whole – one of the primary reasons we’re here this week is these discussions with NATO allies and partners, European Union, and the same conversations that we’ll have with our partners and allies in the Indo-Pacific is to ensure that we take an approach that abides by the same principles we have lived by as we’ve developed this technology for as long as we’ve had a Department of Defense around.

I think we actually have time for three more questions, if we can do that, and then we’ll wrap that up.

Question: The White House recently released a statement saying that with regards to future – the future regulation of AI, “Europe should avoid heavy-handed innovation-killing models, and instead consider a similar regulatory approach” to the U.S. What are the ramifications of the bloc adopting a tougher regulatory stance in AI, and don’t you think that a more stringent approach, regulatory approach, would help mitigate some of the security concerns regarding suppliers of equipment emanating from totalitarian regimes worldwide?

Lt. Gen. Shanahan: I think the most important starting point for the answer to that question is let’s separate the two things on security and supply chains, which is a problem that we are addressing, and it is a serious problem about everything from where the chips come through – come from and designed and developed and fielded, to how the data is produced, how the data is curated and stored, all the way to the security of physical weapons systems that might now have AI-enabled capabilities. So there is a lot going on in not just the Department of Defense but across many countries on how to do better at protecting the physical aspects of a supply chain.

That I take – we look at that differently than we would on this other part of the regulations and frameworks behind it. The White House recently released, within the last week, and some of you I’m sure have seen it, sort of the principles for AI for outside the United States Government, looking at regulatory and non-regulatory approaches. Our starting point and the White House’s starting point is light-touch regulation wherever possible. The last thing we want to do in this field of emerging technology moving as fast as it is, is to stifle innovation. Over-regulating artificial intelligence is one way to stifle innovation and do it very quickly.

Now, we realize that self-regulation will not work everywhere all the time, so what are the – what are the right combinations of self-regulation, government-enforced regulation, and how do we work together as an alliance with NATO and with the European Union to find common ground? I think just in the discussions we’ve had in the last two days, there are far more commonalities than there are differences, especially when we talk about principles of artificial intelligence and the ethical and safe lawful use of it. So we’re careful on the regulation piece. I think there is a grave danger of over-regulating and stifling innovation, but we also realize the technology is so immature and new that there are risks introduced by bringing these capabilities. So what can we do to ensure that we minimize those risks, or mitigate those risks, turn the unknowns into the knowns, and then come up with a mitigation plan?

Question: What ethical assurances does the military have in order to champion and give private sector tech companies the confidence to work with DOD; a position seemingly at odds with many ethical demands of younger employees?

Lt. Gen. Shanahan: I’m particularly well-qualified to answer this, having led Project Maven for a couple of years. And anybody who’s followed Project Maven understands the relationship with Google and what happened at the end of that, with so many lessons learned on both sides. So from our side, I think Google had as many lessons, if not more than we did, and this applies to any company we’re working with right now.

This idea of trust and transparency – how much can we tell what we’re doing at the Department of Defense? My finding, as I’ve been working this now for about three years: it’s a lack of understanding of what DOD is doing and why we’re doing it. More often than not, there is an assumption about what the Department of Defense is doing, which is just wrong. It’s inaccurate, because nobody’s ever explained what the department is doing. We have to balance sort of our operational security and how much we reveal, but I think we can do that in ways that will make people more comfortable – never perfectly comfortable. I think some people just don’t like the idea of military use of artificial intelligence. As a dual or omni-use technology, you will not keep it out of the military throughout the world. This is just a classic case of a technology that starts in commercial industry and is going to promulgate, and rapidly, throughout the military. So how do we get it right?

So this idea of trust and transparency and explaining what we’re doing and why, that’s made a difference just in the last year the more we’ve been willing to talk about our approach to AI-enabled capabilities. And also there is a little bit of a generational – at least in the United States – a lot of people have never worked with the United States military, have never known – truly have never known anybody that’s worn a uniform, and they don’t understand what the Department of Defense does. They grew up in a commercial industry that is doing it for online sales. They don’t know what we’re doing it for.

Mr. Mulchandani: I mean, what the General mentioned is absolutely true, which is California and Washington, D.C., are on different sides of the coast. They may as well be on different planets in the sense of just the level of work and contact that the two industries and organizations used to have has been very limited historically. And there was this sort of throw-it-over-the-fence model where we threw technologies over and they just got used in this black box, and nobody knew what was going on.

The level of transparency that the DOD is now taking towards the work we’re doing around AI is astonishing. I mean, Gen. Shanahan has been on the road explaining the work we’re doing – the ethics work, the input from industry, the level of contact that we have ongoing with industry. The JAIC’s mission and work is almost entirely commercial. We build almost no technology inside. And having that contact and sustained contact and building trust and transparency takes time, but we’re seeing some incredibly great work with industry and it’s just going to get better.

So I think that’s sort of the key piece: the trust, the transparency, and building that out is going to be the basis for it. And there are things that we’re going to be working on that we can’t discuss outside, et cetera, but rest assured, the contract with society is still the legal basis for the ethics work and everything that’s all out there. And we’ll be enforcing that legally, but also just philosophically, that’s the way the department works. So it’s been really refreshing after such a long career in tech to be on the other side of this and seeing how it is, and there are really not a lot of mysteries on this side.

Lt. Gen. Shanahan: And what I’ve also found in doing this is the importance of having come up with a common vocabulary; what we say and what others hear are two different things sometimes. So a taxonomy and an ontology of what we mean when we say – just artificial intelligence by itself brings a dozen different definitions very quickly. So resetting the baseline so that we’re talking a common language is as important working with NATO and the European Union as it is anybody else. So that’s a great starting point.

Question: Very interesting that you mentioned the different AI projects that are already underway, and I wondered – you mentioned humanitarian aid, aircraft predictive maintenance, and I wondered which of the few that you mentioned you’re seeing the most progress on with applying AI. And could you also talk a little bit about some of the companies you’re working with on those projects?

Lt. Gen. Shanahan: We prefer not to get into which companies we’re working with, and that’s mainly to respect their preferences. Some I’m more than happy to mention, but if I mention one and don’t mention the others, I just – I’d prefer we stay away from that.

On the projects we’re working on right now, both humanitarian assistance and disaster relief and predictive maintenance, I’ve been impressed by the results, but they’re also sort of what I would call foundational results. Just part of this is as we’ve built out this organization, getting muscle memory or getting reps at doing artificial intelligence projects, to get credibility and expertise so that we become known as the center of excellence for artificial intelligence, for fielding AI. Very careful not to step into the great work that continues to go on in research and development through DARPA and the research labs, the national labs. Wonderful work continues to go on. I think we actually need more of it. So this is about fielding.

But what we’ve learned on those other projects is I think it’s the art of the possible and what is feasible. My experience with AI so far has been science fiction for most people because they’ve never seen it in action, and yet they use it on their personal electronic devices 100 times a day. Just by showing it – one of the examples on HADR is wildfire line/perimeter mapping. Imagine the difference that can make in a fast-moving, unfolding crisis scenario where the current method of mapping a fire line is manual: acetate, back of a pickup truck, grease pencil and doing it that way. And it takes potentially hours to get each subsequent update. That is an archaic way of doing business – good as a backup but not where technology is. So we’ve seen some very promising results in fire line/perimeter mapping as well as in flood damage assessment and road obstruction analysis. Some of these are commercial technologies available today, some are being a little bit repurposed for the purposes of what we were asking for, and some have been developed by our academic partners, which has been very helpful.

On predictive maintenance, again, starting with a narrow case of an H-60 helicopter in one of the services – in this case, the Special Operations Command – showing promising initial results to say this part is likely to fail in this many hours in the next number of flights. Very good, encouraging, but still not good enough. So what we’re doing is getting the building blocks, knowing that the whole world of AI, especially on these algorithms that we field, is dependent on continuous integration and continuous delivery. They have to be updated faster and faster, which for the Department of Defense is a new way of doing business for the most part. I came from a background in which we were doing block upgrades of big weapons systems in five-year increments that were so far behind by the time you fielded that essentially some parts of it were obsolete. That won’t work in this environment.

So we’re trying to understand all the elements of the AI delivery pipeline from data reception to data curation, data management, data labeling, build an algorithm or a model off of an algorithm with data, test and evaluation, integrate it to weapons systems, and then on and on into continuous integration, continuous delivery. Every step of that is relatively new for the Department of Defense – it’s brand new for many people, but what we’re doing is building those repetitions so we understand what it takes to do that in every other project.

So I actually feel very pleased by the results, limited as they are. I will never be the person that overstates the results. But what we’re learning from those will allow us to accelerate in all our other different projects that we’re going to take on over the course of the upcoming year of 2020, which I think I’ll be calling the year of AI for DOD because I think we’re going to see this happen faster and faster the better we get at it.

Mr. Mulchandani: Yeah, and just to add to what the General pointed out, I’m a big believer in the sort of scaling curve or the innovation curve, where you end up starting slow and things don’t look like they’re breaking through, and then all of a sudden all the right conditions come through: the algorithms get tuned, the data lines up, the processing power kicks in, and then you end up with these sort of inflection points where these projects sort of get breakthroughs.

So we’re seeing a very similar pattern here where, for instance, when the JAIC started these as its initial two projects, progress was slow and it’s kind of moving along, but we’re now seeing commercial industry getting into certain verticals and areas where there was very slow progress to begin with, and the cycle times in which we’re seeing the improvement are shortening dramatically. And so AI as a market is very hard to characterize. You can’t talk about it as a single market. You have to pick literally an algorithm or a vertical by vertical and see where things scale. And at the JAIC what we’re doing is really focused on areas where there’s a lot of promise, and making initial investments very much like a venture capital firm investing in a market, but then allowing commercial industry to sort of take over or build those solutions out to scale, and then being able to field them becomes a very, very interesting thing to move to.

So in both of those markets we’re seeing that, which is very encouraging, and in 2020 we feel that we’re going to get to scale in both of those spaces.

Lt. Gen. Shanahan: And I’ll close that out by saying my experience with Project Maven and where we are today in the JAIC, the reason Maven is now accelerating and has about a two-year head start on the JAIC is because of user feedback. Taking an initial product that is less than high-performing – and acknowledged that way by everybody, by the developers, by the people receiving it – but the feedback from the users and the operators is what is so critical to moving faster and faster and making that feedback part of the development lifecycle so then you have a better algorithm constantly fielded. This idea of user feedback, that’s what we’re learning from our initial projects, and we’re getting better and better at that to the point where we start with that conversation with users on the very front end so that we don’t try to hand something to them a year down the road and they’ve never seen it before. That is a recipe for disaster.

Mr. Mulchandani: And these are best practices that we’re pulling from industry, the idea of great product management, specifying products up front with great detail, but then the whole DevOps movement that has really kicked into high gear in terms of iterating with the users and small functionality that gets actually pushed out in continuous increments, allows us to get to a level of speed that we didn’t have before. And there’s a lot of changes going on at the DOD that are supporting this – cloud infrastructure, obviously things like JEDI, the DevOps movement, and adopting those practices in the way we build software – and it’s really going to change the game not only now but also in the future for the DOD.

Lt. Gen. Shanahan: As I said, our reason for being out here this week is that this is such an important dialogue to have right now within NATO but also with the European Union. It’s an incredibly important technology. It will change the character of how our militaries fight in the future. But we have a long way to go, and starting with sort of a common framework of what’s an ethics-based discussion, what’s a human-centric approach to artificial intelligence – there is so much more in common than there are differences. We like to focus initially on the areas of commonality and work our way through the differences.

There are inevitable differences in how we approach everything from data regulation to protecting our data and intellectual property protection. This discussion is beginning, but it is just the start of what I think will be years-long, close collaboration and cooperation between NATO and the European Union as we work together on, I think, one of the most important technologies that we’ve seen in a long time. It is just a technology; it’s an enabling technology. It’s up to us to figure out how we really use it to make the most effective and efficient capabilities that we can put into our respective militaries and the rest of our respective governments.